Rules to Better Research & Development

Do you understand the challenges of R&D?

When an R&D auditor visits, they will want to check what work you have ‘experimented’ with. In R&D terms, an experiment is an iterative series of changes to the same piece of code.

That means you will need to be able to show code you wrote that didn’t work. If you didn’t check that in at some stage, you are in trouble.

Video: R&D in Software Development (9 min)

Forgetting to record failed experiments

Unfortunately for R&D, a software developer making changes to a system usually does one of two things. If the change is correct, they commit it and check it in. If it doesn't meet the spec, they revert the changes and try again. Either way, the failed attempts are never recorded.

This means you need to make significant changes to your internal processes, recording much more information to satisfy the R&D criteria.

Forgetting to record evidence of what was available at the time

Additionally, R&D requires you to show that your work generates new knowledge for the industry as a whole. This means you need to show that the code you’ve written wasn’t already written by somebody else.

During development, developers have a lot of internal discussions and make many small decisions. These discussions may happen in person, by email or over IM, and any of them could be required to prove your claim.

The last thing you want to do is read through old employee inboxes and conversations.

Do you copy email content to PBIs?

While working on a task or PBI, it is very important that you save any discussions or contextual information related to the work. This helps anyone who later needs to understand what happened, and provides relevant documents that support your R&D claims.

It is also good practice to include screenshots in the PBIs.

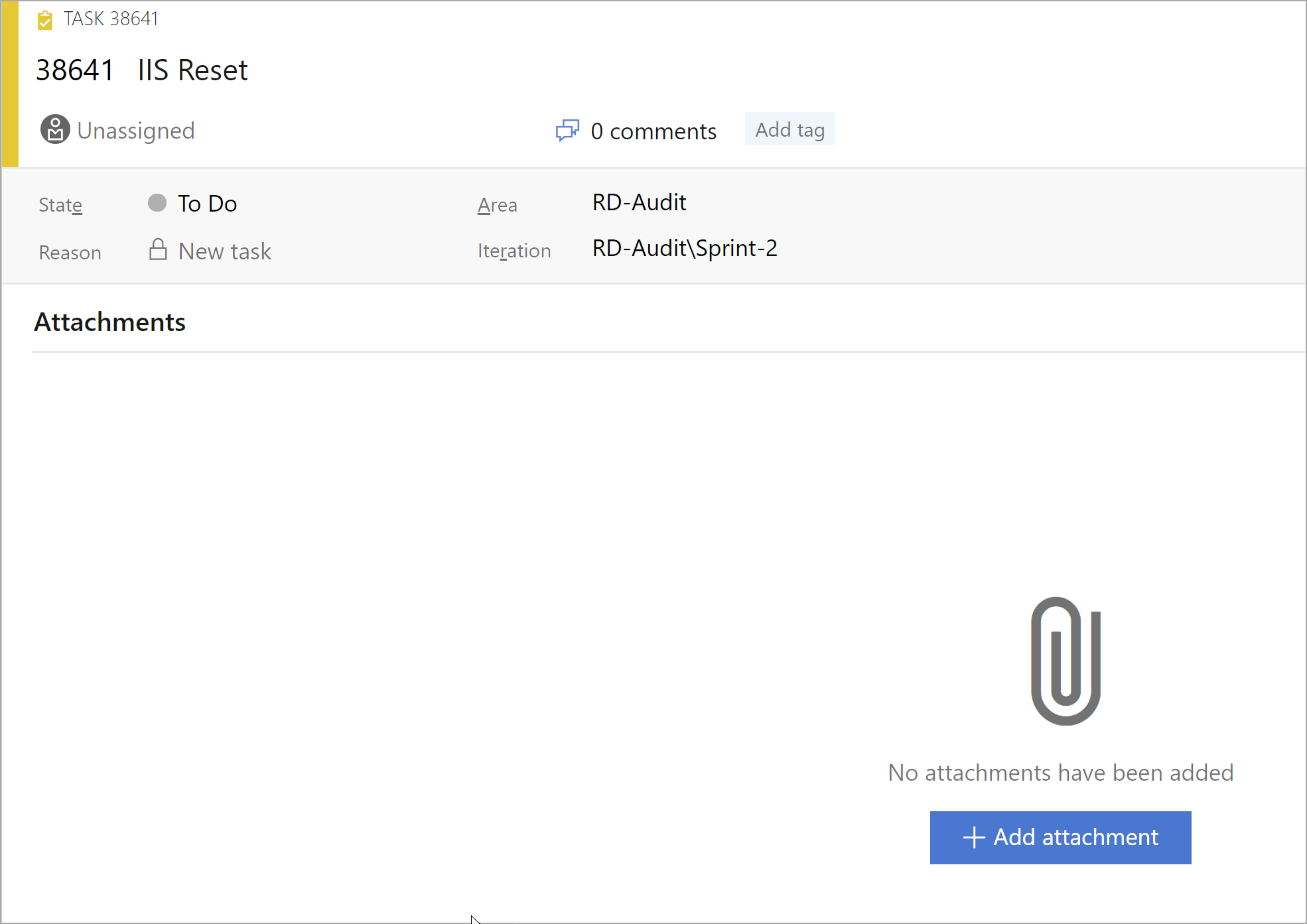

❌ Figure: Bad example - An important task is missing context

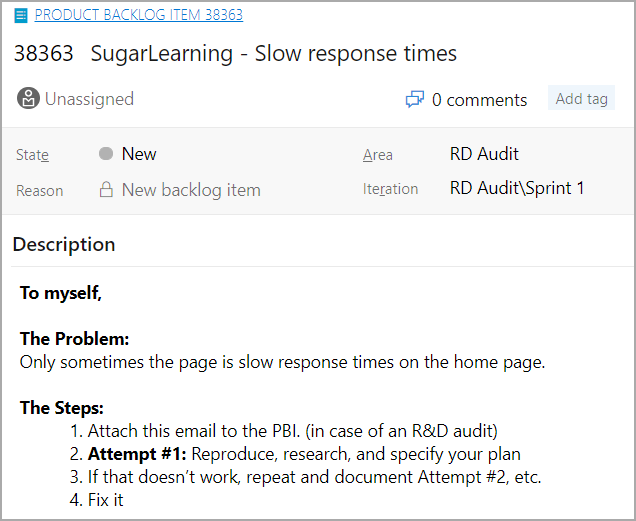

✅ Figure: Good example - Email is copied to the description

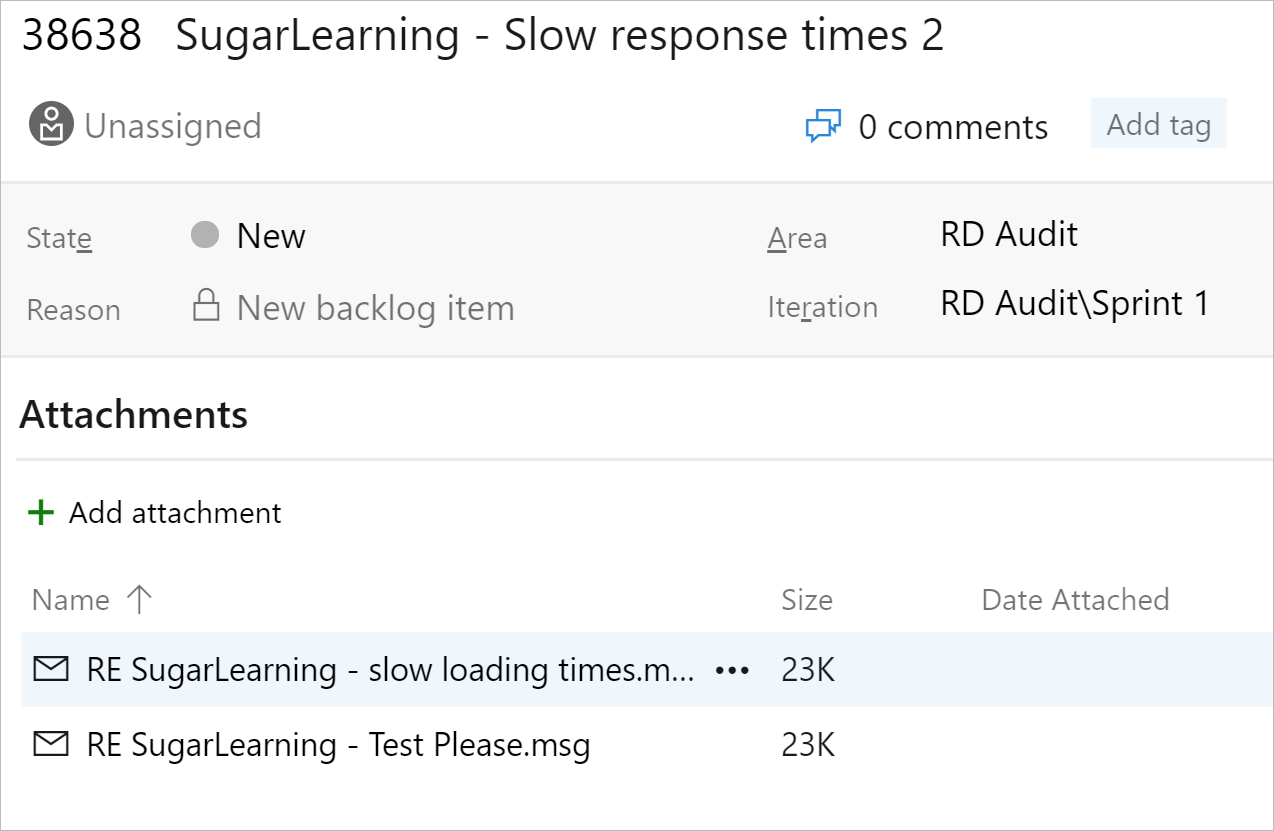

✅ Figure: Good example - Related emails are attached to the PBI

When attaching an email to the PBI, consider whether or not a response to the email is required. The sender will usually expect a response when the issue is resolved. If a response is required, update the Acceptance Criteria with a note. E.g. "Send a 'done' email in reply to the original email (attached)."

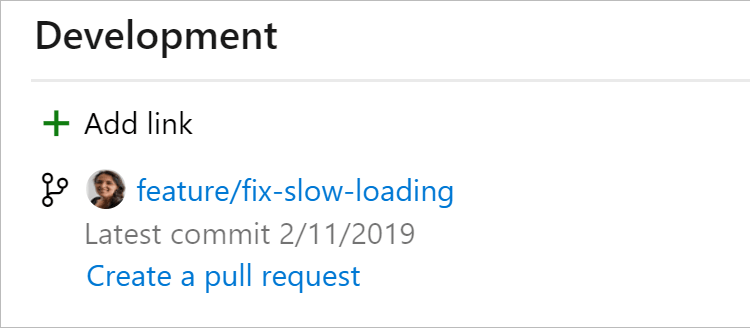
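If you attach emails through the Azure DevOps REST API rather than the web UI, the update is a JSON Patch document. This Python sketch is an illustration under assumptions, not an SSW tool: it assumes the email file has already been uploaded with `POST https://dev.azure.com/{organization}/{project}/_apis/wit/attachments?fileName=...&api-version=7.0` (which returns the attachment `url`), and that your process template has the `Microsoft.VSTS.Common.AcceptanceCriteria` field. The function name `build_email_patch` is hypothetical.

```python
# Field reference name for Acceptance Criteria in the Scrum and Agile process templates.
ACCEPTANCE_CRITERIA_PATH = "/fields/Microsoft.VSTS.Common.AcceptanceCriteria"
DONE_EMAIL_NOTE = "Send a 'done' email in reply to the original email (attached)."


def build_email_patch(attachment_url: str, email_subject: str,
                      existing_criteria: str, reply_required: bool) -> list[dict]:
    """Build a JSON Patch that attaches an uploaded email to a PBI and, when the
    sender expects a reply, appends a 'done email' note to the Acceptance Criteria."""
    ops = [{
        "op": "add",
        "path": "/relations/-",  # "-" appends a new relation
        "value": {
            "rel": "AttachedFile",
            "url": attachment_url,  # URL returned by the attachment upload call
            "attributes": {"comment": f"Original email: {email_subject}"},
        },
    }]
    if reply_required:
        # "add" on a field sets its whole value, so keep the existing criteria.
        criteria = (f"{existing_criteria}<br>{DONE_EMAIL_NOTE}"
                    if existing_criteria else DONE_EMAIL_NOTE)
        ops.append({"op": "add", "path": ACCEPTANCE_CRITERIA_PATH, "value": criteria})
    return ops
```

You would send the returned list with `PATCH .../_apis/wit/workitems/{id}?api-version=7.0` and the `application/json-patch+json` content type. Passing in the existing criteria avoids overwriting them when the note is added.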

Do you link commits, branches, and PRs to a PBI?

To improve visibility into what work has been done, link your PBIs and tasks to the related commits, branches, and pull requests. Every commit, branch, and PR should be associated with a PBI.
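Depending on your repository settings, Azure Repos can link a commit to a work item when the commit message mentions its ID as `#1234`, and GitHub repositories connected to Azure Boards use `AB#1234`. Assuming your team follows that convention, a small check like this hypothetical Python sketch can flag commits that aren't associated with a PBI, for example from a `commit-msg` hook or a build step.

```python
import re

# Assumed convention: "#1234" in the message names work item 1234.
# This also matches the "#1234" inside "AB#1234".
WORK_ITEM_MENTION = re.compile(r"#(\d+)\b")


def work_item_ids(message: str) -> list[int]:
    """Return the work item IDs mentioned in a commit message, in order, without duplicates."""
    ids: list[int] = []
    for match in WORK_ITEM_MENTION.finditer(message):
        item_id = int(match.group(1))
        if item_id not in ids:
            ids.append(item_id)
    return ids


def unlinked_commits(commits: dict[str, str]) -> list[str]:
    """Given {commit_sha: message}, return the SHAs that mention no work item."""
    return [sha for sha, message in commits.items() if not work_item_ids(message)]
```

Git passes a `commit-msg` hook the path of the file that holds the proposed message, so a hook could read that file, call `work_item_ids`, and exit with a non-zero status (which aborts the commit) when the list is empty.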

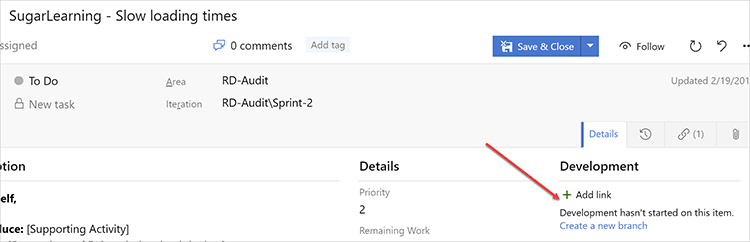

❌ Figure: Bad example - No linked commits, branches or pull requests

✅ Figure: Good example - Git branch linked to PBI in Azure DevOps

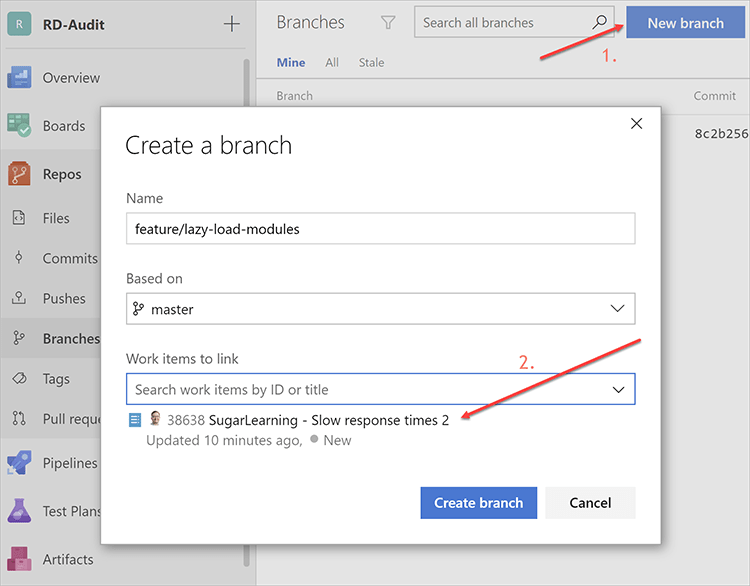

Using Azure DevOps

If you create branches through Azure DevOps, you can link them to a work item during the creation process.

✅ Figure: Good example - Using Azure DevOps to link PBI during creation

Learn more in this article: Linking Work Items to Git Branches, Commits, and Pull Requests.

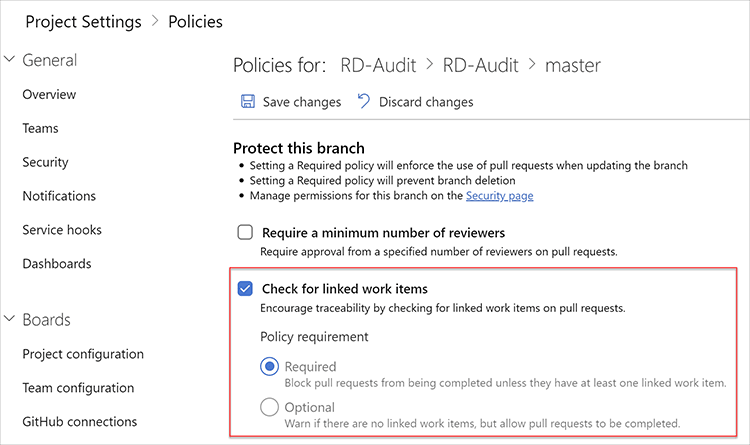

Automating rule enforcement

You can set up Branch Policies on your main branches to enforce this behaviour.

✅ Figure: Good example - Branch Policy on the master branch to enforce linked work items on pull requests
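Branch policies can also be scripted, which helps when you manage many repositories. This sketch only builds the request body for the Azure DevOps policy `Configurations - Create` endpoint; it does not send it. Rather than hard-coding the policy type GUID, it assumes you look it up with `GET https://dev.azure.com/{organization}/{project}/_apis/policy/types?api-version=7.0` and pick the type whose display name is "Work item linking" (check the name in your own organization's response). The helper names are hypothetical.

```python
def policy_configurations_url(organization: str, project: str) -> str:
    """Endpoint for creating a policy configuration (Configurations - Create)."""
    return (f"https://dev.azure.com/{organization}/{project}"
            "/_apis/policy/configurations?api-version=7.0")


def work_item_linking_policy(policy_type_id: str, repository_id: str,
                             branch: str, blocking: bool = True) -> dict:
    """Request body that requires linked work items on pull requests into `branch`."""
    return {
        "isEnabled": True,
        # True makes the policy required; False makes it optional (it won't block completion).
        "isBlocking": blocking,
        "type": {"id": policy_type_id},  # looked up via GET .../_apis/policy/types
        "settings": {
            "scope": [{
                "repositoryId": repository_id,
                "refName": f"refs/heads/{branch}",
                "matchKind": "exact",  # apply to this branch only, not a prefix
            }]
        },
    }
```

POST the body to the URL from `policy_configurations_url`, once per protected branch.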

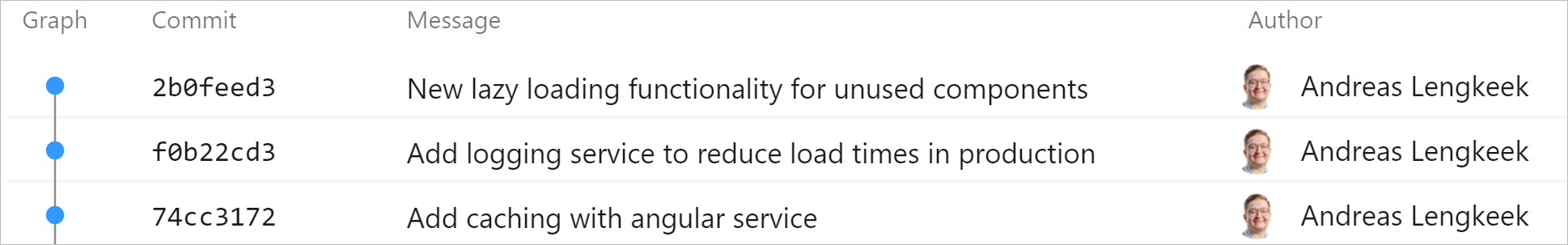

Do you document your failures for R&D?

Australian R&D laws require you to show the separate attempts you make when developing a feature that counts towards R&D.

For this reason, make sure you commit between every attempt, even when it does not have the desired effect, so that the history of experimentation is recorded.
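A consistent commit message makes each attempt easy to find when an auditor asks for it. The format below is a hypothetical convention, not an Australian R&D requirement or an SSW standard: the subject line names the attempt, its outcome and the PBI (using the `#id` mention convention), and the body records the hypothesis.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    """One experiment attempt (hypothetical structure, not a Git or SSW standard)."""
    pbi_id: int
    number: int
    hypothesis: str
    outcome: str  # e.g. "failed", "partial", "succeeded"
    notes: str = ""


def experiment_commit_message(attempt: Attempt) -> str:
    """Format a commit message that records an attempt, even when it failed."""
    subject = (f"Attempt {attempt.number} ({attempt.outcome}): "
               f"{attempt.hypothesis} #{attempt.pbi_id}")
    body = [f"Hypothesis: {attempt.hypothesis}", f"Outcome: {attempt.outcome}"]
    if attempt.notes:
        body.append(f"Notes: {attempt.notes}")
    return subject + "\n\n" + "\n".join(body)
```

You could pipe the message into `git commit -F -`, which reads the message from standard input. If an attempt failed, one option is to commit it and then run `git revert HEAD`, so your working code returns to its previous state while the failed attempt stays in the history.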

Example scenario

A developer is improving load times while migrating an MVC app to Angular. They create a new feature branch and begin development on their local machine.

Once they are done, the developer commits all their changes at once and pushes them to the remote repository. With this approach, the history of experimentation is lost, which makes the R&D claim difficult to prove.

❌ Figure: Bad example - Only the final solution is committed. Experimentation history not recorded

For the same scenario, the developer makes sure to commit every separate attempt to reduce the load times of the web application. This way, everybody can see what kinds of experimentation were done to solve the problem.

✅ Figure: Good example - Each attempt has a new commit and is not lost when retrieving history

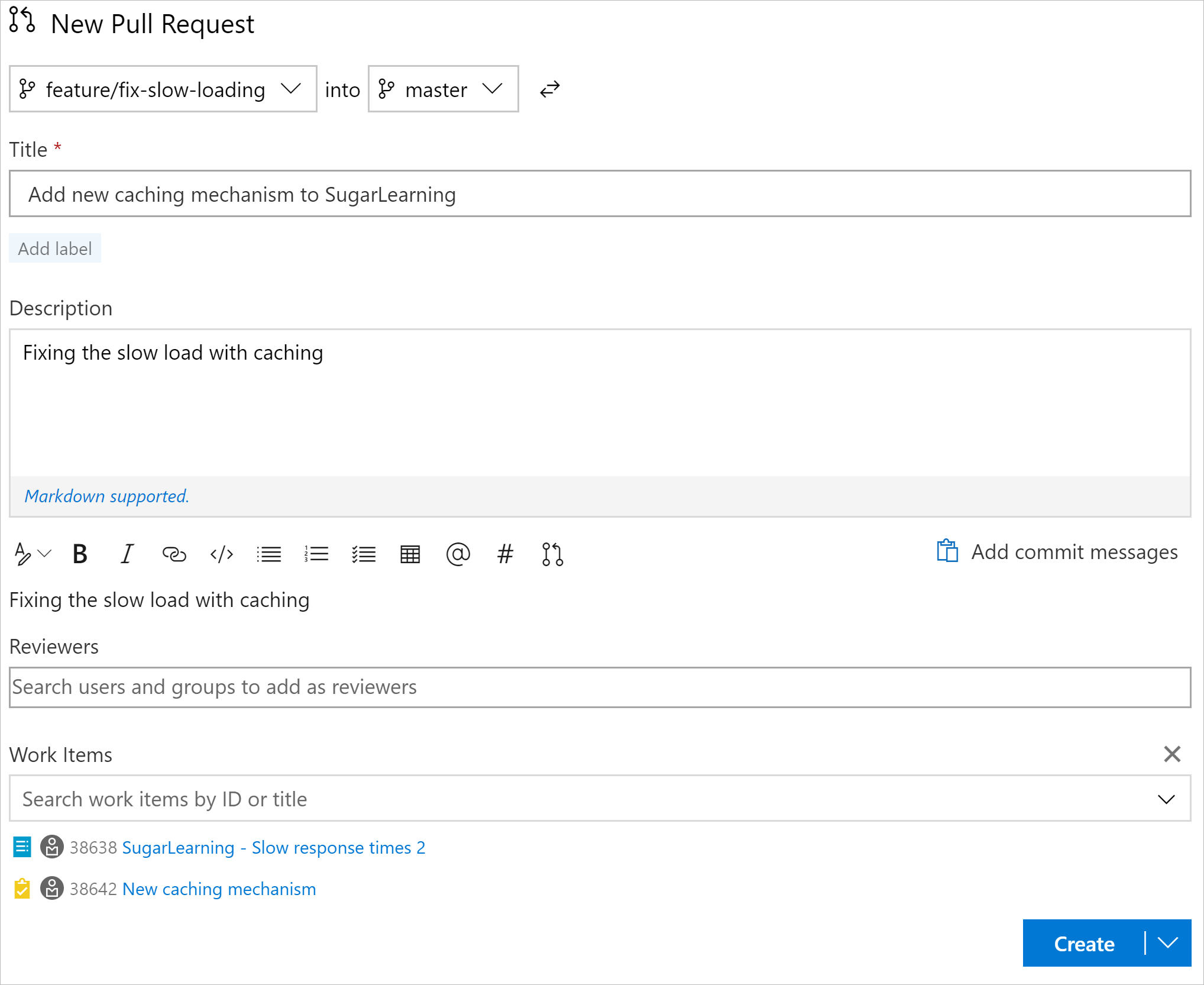

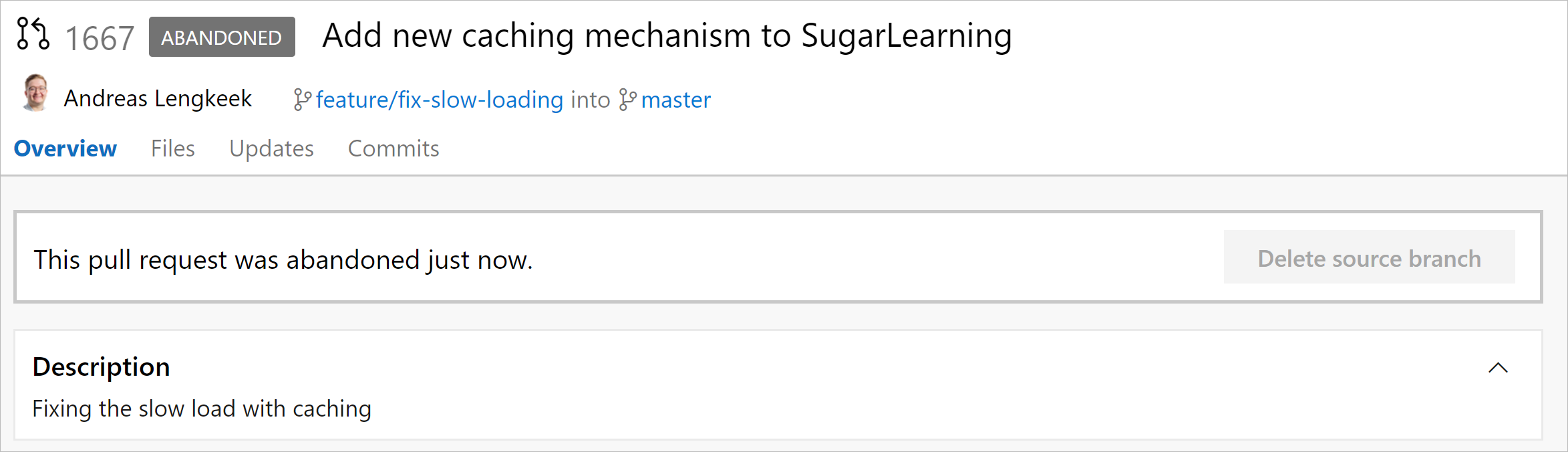

Save failed experiments using abandoned pull requests

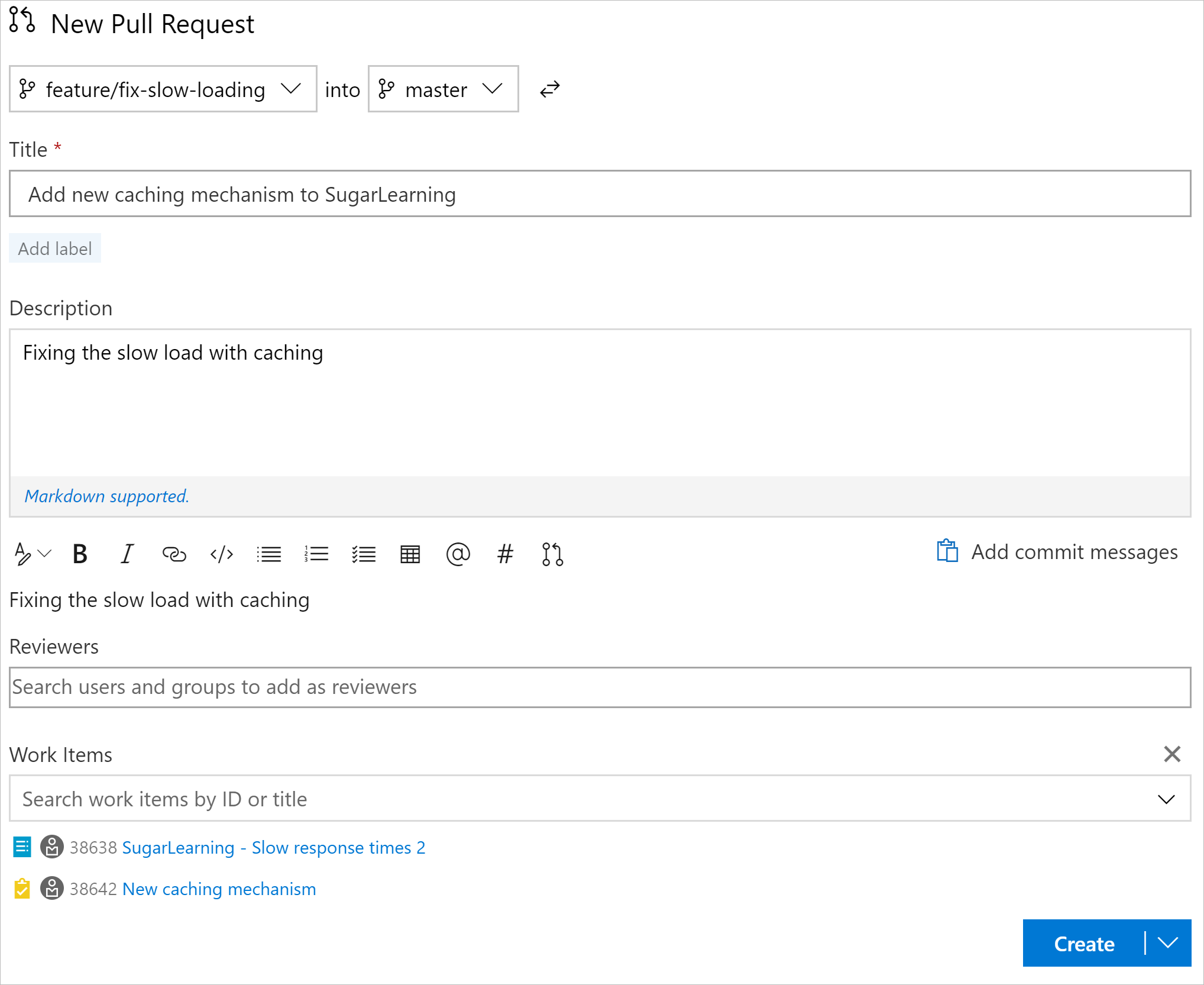

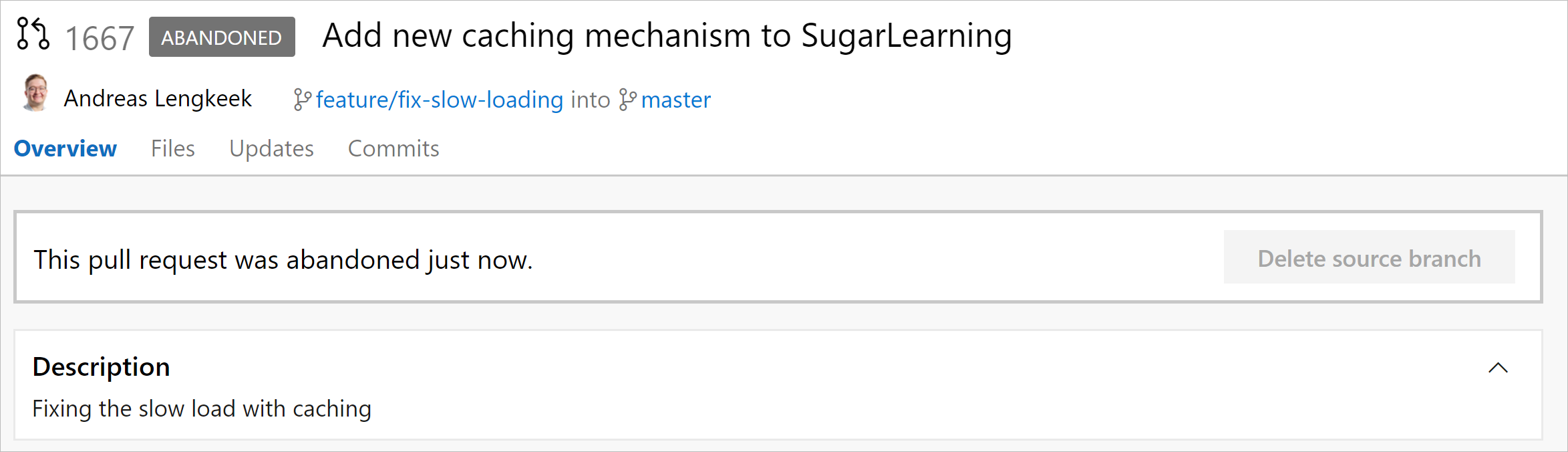

Assume you are creating a cool new feature. First, you create a new branch, make some commits, check that it works, and then submit a pull request. However, if you are not happy with the feature, don’t just delete the branch as you normally would. Instead, create a pull request anyway and set its status to Abandoned. Now you can delete the branch as normal.

This makes sure that we have a historical log of work completed, and still keeps a clean repository.

✅ Figure: Good example - Set up a pull request for the feature branch so that we have a history of the commits

✅ Figure: Good example - PR is abandoned with a deleted branch
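If you abandon experiments often, the three steps (create the pull request, abandon it, delete the branch) can be scripted against the Azure DevOps Git REST API. This Python sketch only builds the requests so you can review them; sending them (for example with a personal access token) is left out. It assumes API version 7.0, and the helper names are hypothetical. A branch is deleted by updating its ref to an all-zero object ID.

```python
from typing import Any, NamedTuple

ZERO_SHA = "0" * 40  # updating a ref to this object ID deletes it


class ApiRequest(NamedTuple):
    method: str
    url: str
    body: Any


def repo_url(org: str, project: str, repo_id: str, resource: str) -> str:
    return (f"https://dev.azure.com/{org}/{project}/_apis/git/repositories/"
            f"{repo_id}/{resource}?api-version=7.0")


def create_pr_request(org: str, project: str, repo_id: str, branch: str,
                      target: str, title: str, description: str) -> ApiRequest:
    """Step 1: open a PR so the experiment's commits are recorded against it."""
    body = {
        "sourceRefName": f"refs/heads/{branch}",
        "targetRefName": f"refs/heads/{target}",
        "title": title,
        "description": description,  # e.g. what was tried and why it was rejected
    }
    return ApiRequest("POST", repo_url(org, project, repo_id, "pullrequests"), body)


def abandon_pr_request(org: str, project: str, repo_id: str,
                       pull_request_id: int) -> ApiRequest:
    """Step 2: set the PR status to abandoned instead of completing it."""
    url = repo_url(org, project, repo_id, f"pullrequests/{pull_request_id}")
    return ApiRequest("PATCH", url, {"status": "abandoned"})


def delete_branch_request(org: str, project: str, repo_id: str, branch: str,
                          head_sha: str) -> ApiRequest:
    """Step 3: delete the branch to keep the repository clean."""
    body = [{"name": f"refs/heads/{branch}",
             "oldObjectId": head_sha,  # the branch's current commit
             "newObjectId": ZERO_SHA}]
    return ApiRequest("POST", repo_url(org, project, repo_id, "refs"), body)
```

Call them in order, taking `pullRequestId` from the create response and the branch's current commit SHA from the branch itself, because the ref update needs the old object ID.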

Do you record your research under the PBI?

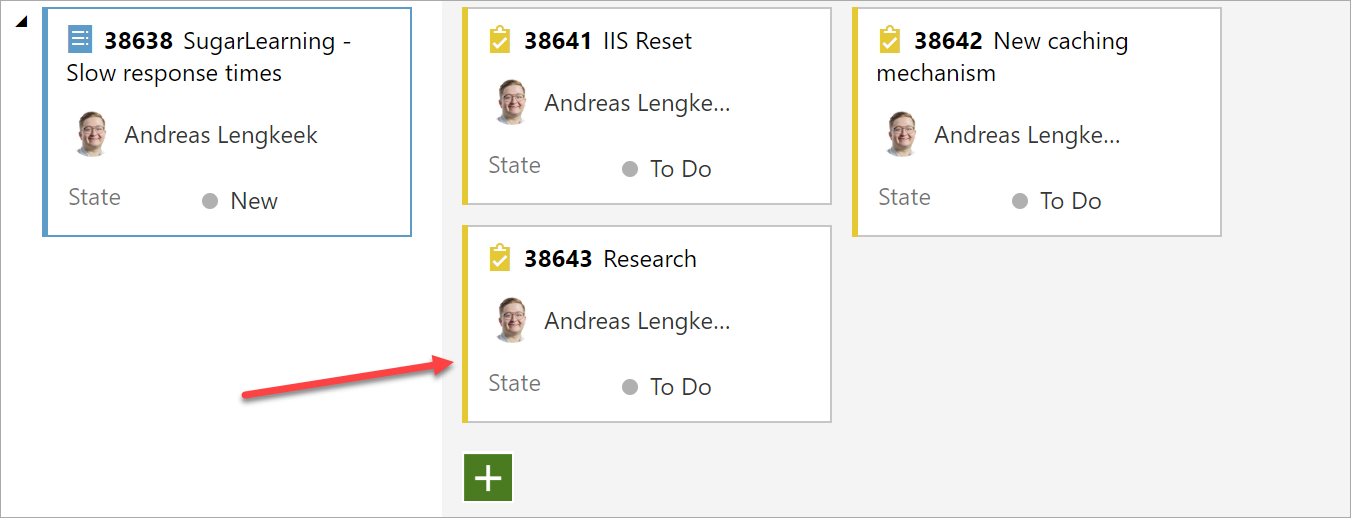

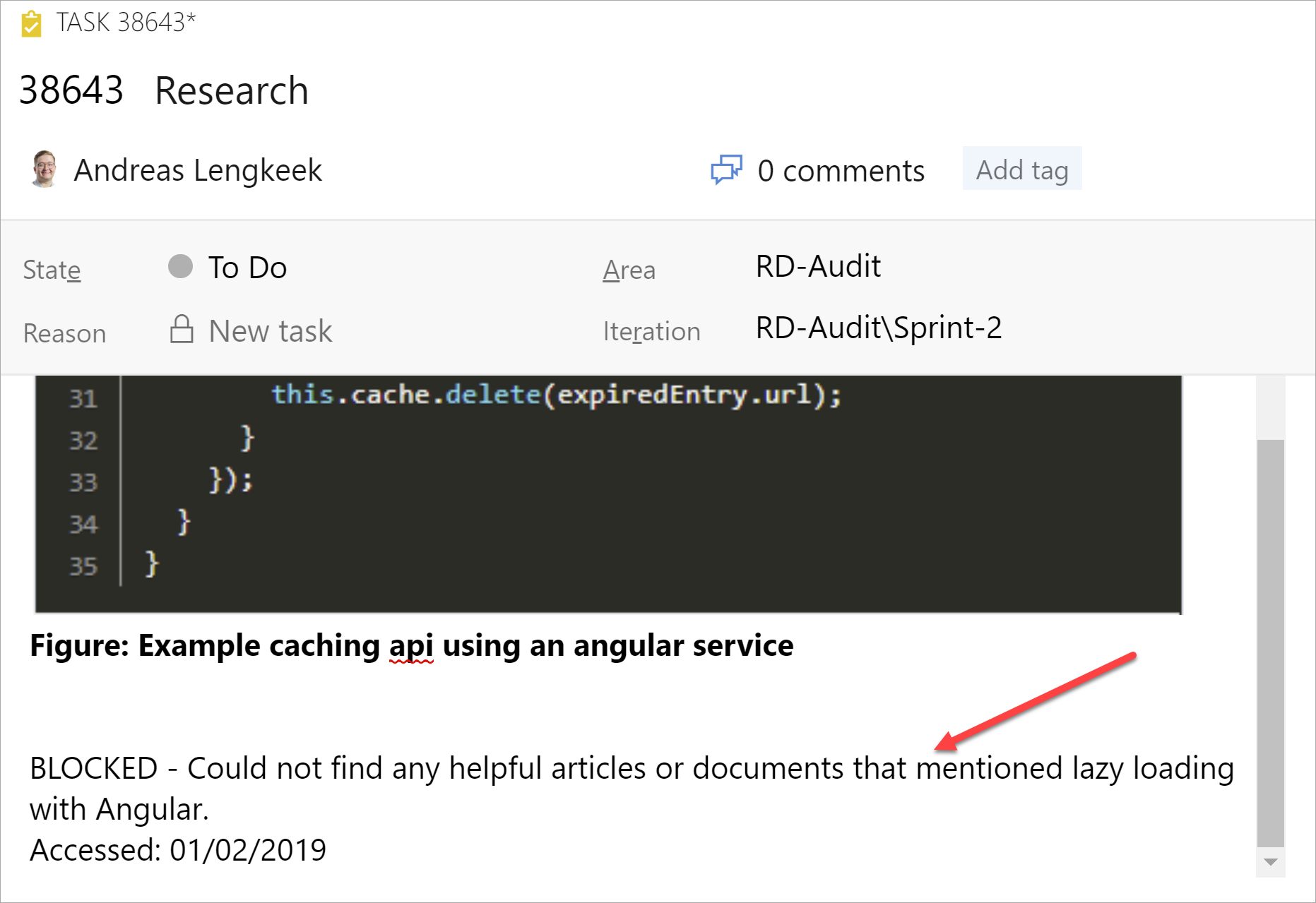

If you have done significant research on a topic, this should be documented.

Create a new task under the PBI and put these resources in its description, each with a short summary of what you learned from it.

✅ Figure: Good example - Task for research created under the PBI

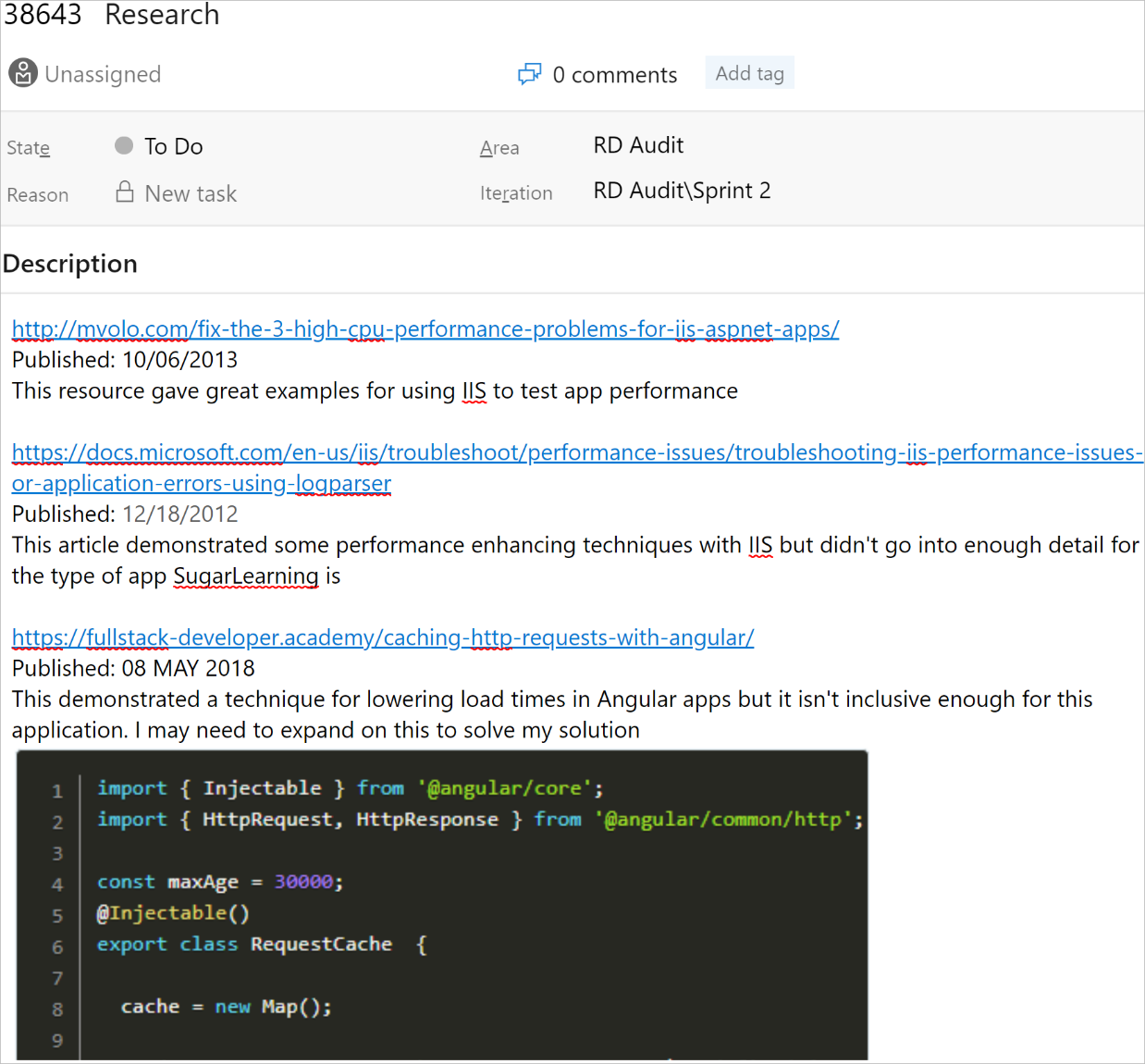

Each source you used should be referenced here with its publish date and a short description of what you learned from it. Screenshots of the relevant sections are even better.

✅ Figure: Good example - Example research task on using caching to improve load times with SugarLearning

✅ Figure: Good example - If you don't find any suitable answers to your query, remember to make a short note of what you were looking for
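If you create research tasks from a script, the task is a JSON Patch document posted to `https://dev.azure.com/{organization}/{project}/_apis/wit/workitems/$Task?api-version=7.0` with the `application/json-patch+json` content type. This sketch renders each source with its publish date and what you learned, notes when nothing suitable was found, and links the task to its parent PBI with a `System.LinkTypes.Hierarchy-Reverse` relation. The `Source` structure and helper names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from html import escape
from typing import Optional


@dataclass
class Source:
    title: str
    url: str
    published: Optional[date]  # None if the page shows no publish date
    takeaway: str              # what you learned from it


def research_description(question: str, sources: list[Source]) -> str:
    """Render the research log as HTML, since System.Description is an HTML field."""
    parts = [f"<p><b>Question:</b> {escape(question)}</p>"]
    if not sources:
        parts.append("<p>No suitable answers found.</p>")
    else:
        items = []
        for s in sources:
            published = s.published.isoformat() if s.published else "date unknown"
            items.append(f'<li><a href="{escape(s.url)}">{escape(s.title)}</a> '
                         f"({published}): {escape(s.takeaway)}</li>")
        parts.append("<ul>" + "".join(items) + "</ul>")
    return "".join(parts)


def research_task_patch(org: str, parent_pbi_id: int, title: str,
                        description: str) -> list[dict]:
    """JSON Patch body that creates the task and links it to its parent PBI."""
    return [
        {"op": "add", "path": "/fields/System.Title", "value": title},
        {"op": "add", "path": "/fields/System.Description", "value": description},
        {"op": "add", "path": "/relations/-",
         "value": {"rel": "System.LinkTypes.Hierarchy-Reverse",  # parent link
                   "url": f"https://dev.azure.com/{org}/_apis/wit/workItems/{parent_pbi_id}"}},
    ]
```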

Do you understand the difference between a POC and MVP?

It is really important to understand the difference between a Proof of Concept (POC) and a Minimum Viable Product (MVP). It is also important that clients understand the difference so they know what to expect.

POC: Proving feasibility

A POC is developed to demonstrate the feasibility of a concept or idea, often focusing on a single aspect of a project. It's about answering the question, "Can we do this?" rather than "How will we do this in production?" POCs are typically:

- Quick and focused: Developed rapidly to test a specific hypothesis

- Experimental: Used to validate technical feasibility, explore new technologies, or demonstrate a concept

- Disposable: Not intended for production use; often discarded after proving the concept

POCs should be built, tested, and thrown away. They are not intended to be used in a production environment.

MVP: Delivering value

Conversely, an MVP is a version of the product that includes just enough features to be usable so stakeholders/users can provide feedback for future product development.

- End-to-end functionality: Covers a single feature or user flow in its entirety

- Production ready: Developed with more attention to detail, as it will be used in a live environment

- Foundation for iteration: Serves as a base to gather user feedback, validate assumptions, and guide future development

Examples

Consider a startup exploring the use of AI to personalize online shopping experiences. A POC might involve creating a basic algorithm to recommend products based on a user's browsing history, tested internally to prove the concept's technical viability.

Building on the POC's success, the startup develops an MVP that integrates this personalized recommendation engine into their shopping platform, deploying it to a segment of their user base. This MVP is monitored for user engagement and feedback, shaping the future roadmap of the product.

Best practices

- For POCs: Clearly define the concept you're testing. Limit the scope to essential elements to prove the hypothesis. Remember, POCs are not mini-products

- For MVPs: Focus on core functionality that delivers user value. Engage with early users for feedback and be prepared to iterate based on what you learn

Tip: Ensure your client has a clear understanding of the difference before starting development. This will help manage expectations and avoid surprises.

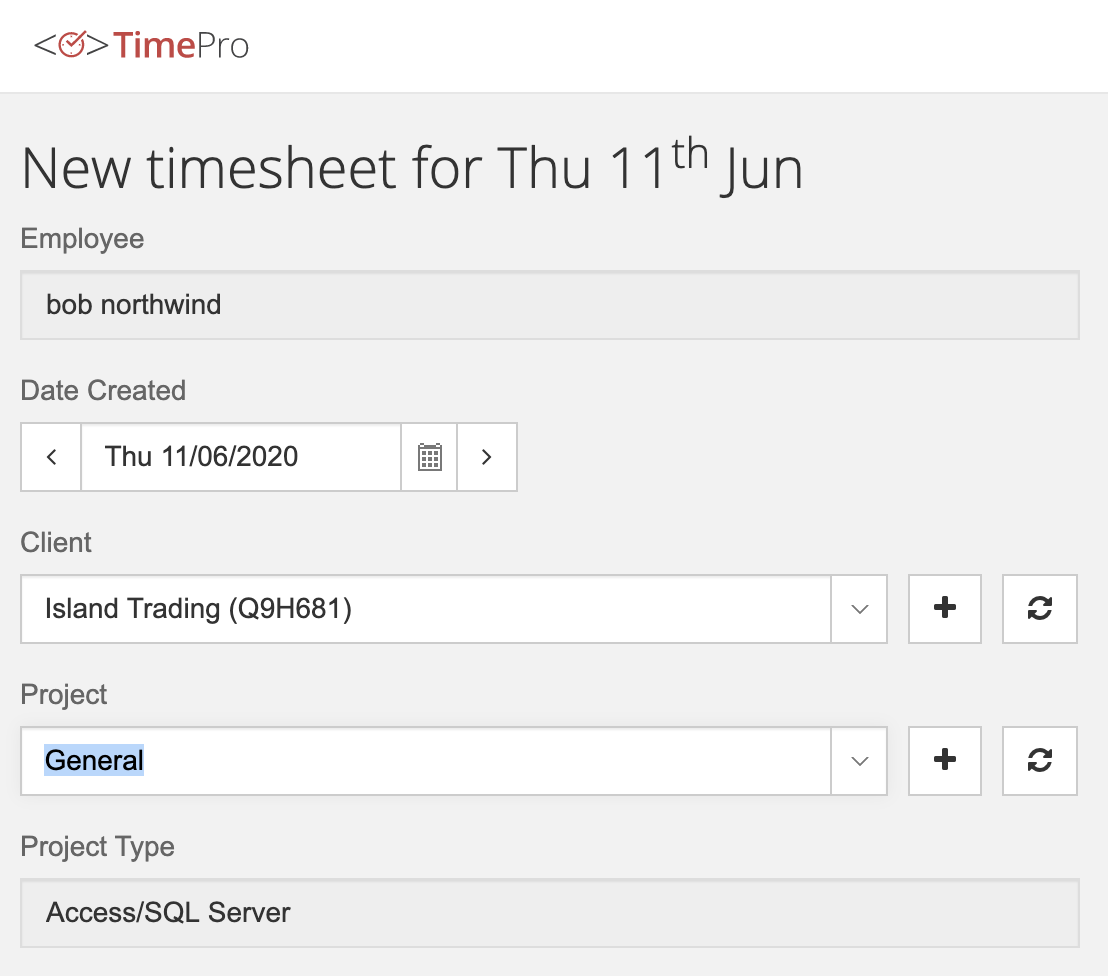

Do you avoid using "General" in timesheets?

Developers should avoid using the "General" project in their timesheets.

"General" should only be used in rare cases, when you're doing something that:

- Doesn’t fit in any of the existing projects

- Isn’t worth creating a new project for, as it won’t be a long-term project or repeated in the future

If you’re using "General" in timesheets frequently, then it’s a problem.

❌ Figure: Bad example - "General" project or category

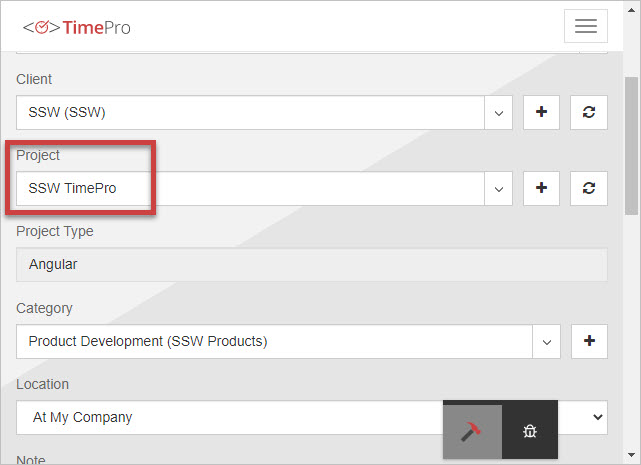

✅ Figure: Good example - Specific project or category