Do you know the potential security risks of using ChatGPT?

Updated by Lewis Toh [SSW] 10 months ago.

Key points:

- Security measures by OpenAI:
  - Encryption
  - Access controls
  - External security audits
  - Bug bounty program
  - Incident response plans

- Responsible data handling practices by OpenAI:
  - Transparency about data collection purposes
  - Data storage and retention policies (30 days)
  - Controlled data sharing with third parties
  - Compliance with regional data protection regulations
  - Respecting user rights and control over their data

- ChatGPT is not confidential:
  - Conversations are used as training data by default, unless you turn this off
  - Users should avoid sharing sensitive information

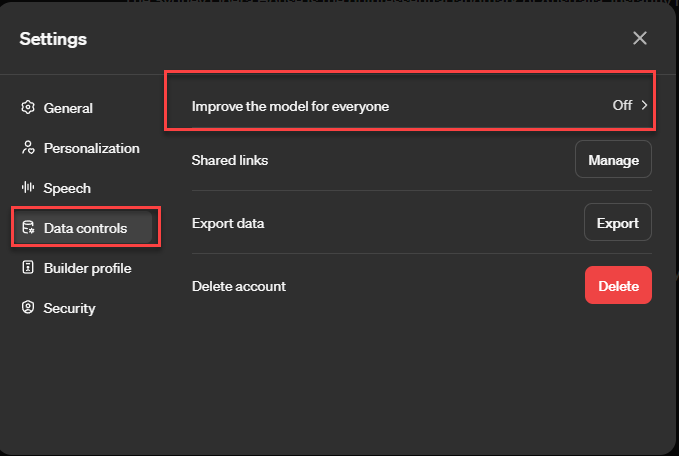

Figure: Go to Your Name | Settings | Data controls and turn off "Improve the model for everyone" to stop the model training on your data

- Potential risks of using ChatGPT:
  - Data breaches
  - Unauthorized access to confidential information
  - Biased or inaccurate information generation

- Best practices for using ChatGPT:
  - Never share or submit sensitive or confidential information to ChatGPT
  - Review the privacy policies of platforms that use ChatGPT
  - Use anonymous or pseudonymous accounts
  - Monitor data retention policies

- Current regulations:
  - No regulations specific to AI systems like ChatGPT at the time of writing
  - Existing data protection and privacy regulations (e.g., GDPR, CCPA) still apply
  - The EU's proposed AI Act could become the first comprehensive regulation for AI technologies

Always exercise caution when using ChatGPT and avoid sharing sensitive information, as data retention policies and security measures can only provide a certain level of protection.
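
One way to act on this advice is to scrub a prompt before it leaves your machine. The sketch below is illustrative only: the `redact` function, the `PATTERNS` table, and the regular expressions (including the `sk-` key format) are assumptions made for this example, not part of ChatGPT or any OpenAI tooling. Regex matching will miss many kinds of sensitive data, so treat it as a safety net rather than a guarantee.

```python
import re

# Hypothetical patterns for illustration; tune these to your own data.
PATTERNS = {
    # Simple email shape: local part, "@", domain, dot, TLD.
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    # Assumed secret-key shape: "sk-" followed by 20+ alphanumerics.
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
}


def redact(text: str) -> str:
    """Replace every match of each pattern with a [LABEL] placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    prompt = "Email jane.doe@example.com, card 4111 1111 1111 1111."
    print(redact(prompt))  # Email [EMAIL], card [CARD].
```

Running the redaction locally means the original values never reach the chat service; only the placeholders do. A manual review of the prompt is still worthwhile, since names, addresses, and internal project details will not match these patterns.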
